
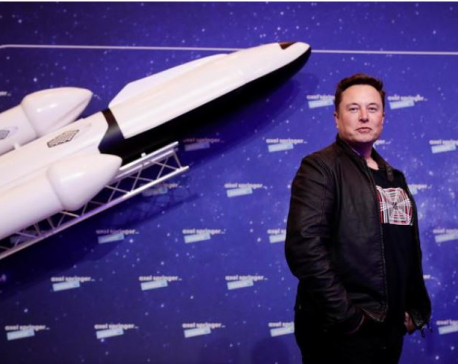

Musk and experts call for halt in 'giant AI experiments'

Published On: March 30, 2023 08:06 AM NPT By: AFP/RSS

PARIS, March 30: Billionaire mogul Elon Musk and a range of experts called on Wednesday for a pause in the development of powerful artificial intelligence (AI) systems to allow time to make sure they are safe.

An open letter, signed by more than 1,000 people so far including Musk and Apple co-founder Steve Wozniak, was prompted by the release of GPT-4 from San Francisco firm OpenAI.

The company says its latest model is much more powerful than the previous version, which was used to power ChatGPT, a bot capable of generating tracts of text from the briefest of prompts.

"AI systems with human-competitive intelligence can pose profound risks to society and humanity," said the open letter titled "Pause Giant AI Experiments".

"Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable," it said.

Musk was an initial investor in OpenAI and spent years on its board, and his car firm Tesla develops AI systems to help power its self-driving technology, among other applications.

The letter, hosted by the Musk-funded Future of Life Institute, was signed by prominent critics as well as competitors of OpenAI like Stability AI chief Emad Mostaque.

- 'Trustworthy and loyal' -

The letter quoted from a blog post written by OpenAI founder Sam Altman, who suggested that "at some point, it may be important to get independent review before starting to train future systems".

"We agree. That point is now," the authors of the open letter wrote.

"Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4."

They called for governments to step in and impose a moratorium if companies failed to agree.

The six months should be used to develop safety protocols and AI governance systems, and to refocus research on ensuring AI systems are more accurate, safe, "trustworthy and loyal".

The letter did not detail the dangers revealed by GPT-4.

But researchers including Gary Marcus of New York University, who signed the letter, have long argued that chatbots are great liars and have the potential to be superspreaders of disinformation.

However, author Cory Doctorow has compared the AI industry to a "pump and dump" scheme, arguing that both the potential and the threat of AI systems have been massively overhyped.


Just In

- Rainbow tourism int'l conference kicks off

- Over 200,000 devotees throng Maha Kumbha Mela at Barahakshetra

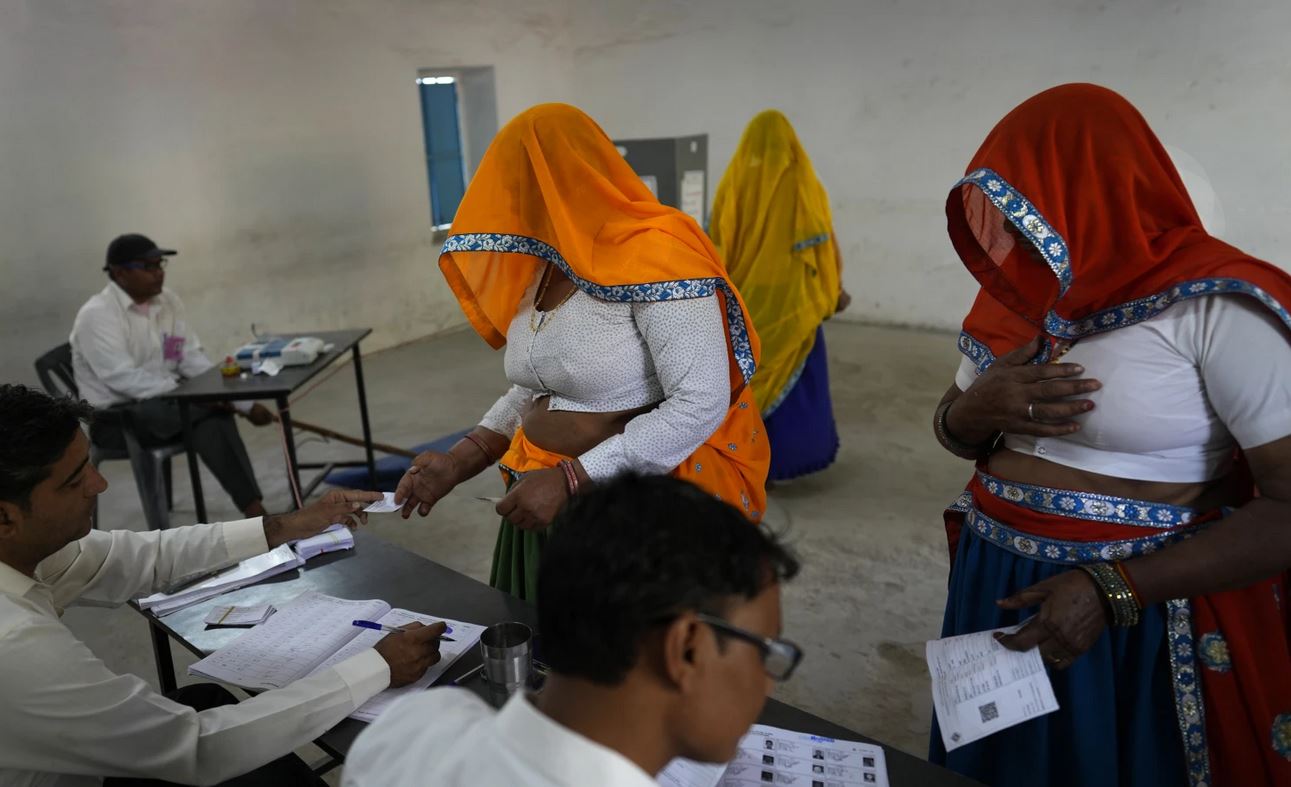

- Indians vote in the first phase of the world’s largest election as Modi seeks a third term

- Kushal Dixit selected for London Marathon

- Nepal faces Hong Kong today for ACC Emerging Teams Asia Cup

- 286 new industries registered in Nepal in first nine months of current FY, attracting Rs 165 billion investment

- UML's National Convention Representatives Council meeting today

- Gandaki Province CM assigns ministerial portfolios to Hari Bahadur Chuman and Deepak Manange

_20220508065243.jpg)

Leave A Comment